Anthropic has introduced a significant policy change that requires consumer Claude users to decide by September 28 whether their conversations may be used to train its AI models. The change alters how the company handles user conversations and how long it retains them.

Anthropic AI Training Policy Changes Explained

Previously, Anthropic maintained a strict policy of automatically deleting consumer chat data within 30 days. However, the company now seeks permission to use conversations and coding sessions for AI model training. Users who do not opt out will have their data retained for five years instead of the previous 30-day window.

Who This Affects and Who’s Exempt

The new Anthropic AI training policy applies specifically to consumer users including Claude Free, Pro, and Max subscribers. Business customers utilizing Claude Gov, Claude for Work, Claude for Education, or API access remain unaffected by these changes. This exemption pattern mirrors OpenAI’s approach to protecting enterprise data.

Why Anthropic Changed Its AI Training Approach

Anthropic officially frames these changes around user choice and model improvement. The company states that user data helps enhance safety systems and improve detection accuracy for harmful content. Additionally, the data contributes to better coding, analysis, and reasoning capabilities in future Claude models.

Competitive Pressures Behind Anthropic AI Training

Behind the official framing, competitive pressure in the AI industry is a substantial factor. Like other large language model companies, Anthropic needs vast amounts of high-quality conversational data to keep pace with rivals such as OpenAI and Google. Access to millions of real-world interactions provides exactly that kind of training material.

Industry-Wide Data Policy Shifts

These changes reflect broader industry trends as AI companies face increasing scrutiny over data retention practices. OpenAI currently battles a court order requiring indefinite retention of consumer ChatGPT conversations due to ongoing litigation with publishers. This legal landscape influences how all AI companies approach data management.

User Awareness and Implementation Concerns

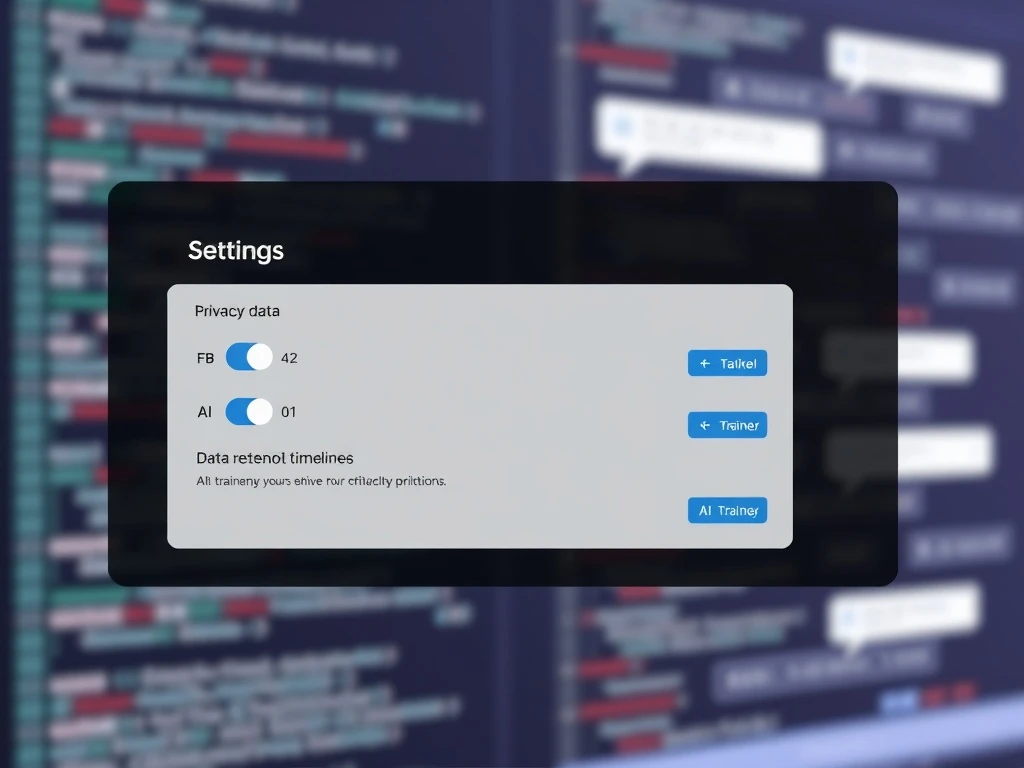

The implementation of Anthropic’s new AI training policy raises significant concerns about user awareness. Existing users encounter a pop-up with large “Accept” text and a much smaller toggle switch automatically set to “On” for data sharing. This design potentially leads users to consent without fully understanding the implications.

Regulatory Scrutiny and Privacy Considerations

Privacy experts consistently warn about the challenges of obtaining meaningful consent in complex AI systems. The Federal Trade Commission has previously cautioned companies against surreptitious policy changes or burying disclosures in fine print. However, enforcement remains uncertain given current commission staffing levels.

Frequently Asked Questions

What is the deadline for opting out of Anthropic’s data training?

Users must make their decision by September 28, 2025. After this date, the new policy terms apply according to each user’s selection.

How does this affect business customers?

Business customers using Claude Gov, Claude for Work, Claude for Education, or API access remain completely unaffected by these policy changes.

What happens if I don’t make a selection?

The data-sharing toggle in the pop-up is set to “On” by default, so users who accept without changing it will have their data used for AI training and retained for five years.

Can I change my decision later?

Yes. Anthropic says users can change their selection at any time in their privacy settings. A changed setting applies going forward; data already used to train a model cannot be withdrawn from that training.

How does this compare to OpenAI’s policies?

Both companies now follow similar patterns: consumer data may be used for training while enterprise data receives stronger protections.

What specific data gets used for training?

The policy covers conversations and coding sessions, which Anthropic says help improve model safety and capabilities.